Applied Context Engineering

clarity + iteration + intuition is what works.

I first wrote about context engineering over 2.5 years ago, in June 2023. My first version was very abstract, and I was mainly writing for my own enjoyment. I had no idea how impactful it would be, both for my day-to-day life and more broadly.

It is clear now that this emerging domain will only grow in importance as we build out AI-first software. Doing so means developing Context Architectures that blend the awe-inspiring power of probabilistic, LLM-driven Agents with the reliability and strength of deterministic software.

As this field emerges, here are some of my core findings:

Context engineering is the ruthless pursuit of clarity.

Why does it matter that the agent has this information at step 3 instead of step 4? Why should we solve this problem in this specific way, and what does that mean for how we write our specifications and prompts? In this way it feels like applied epistemology.

Context engineering is truly novel and the same old shit, at the same time.

It is a totally foreign, vibe-based, squishy practice that barely deserves to have engineering in its name. It is also, at the same time, effectively the same core engineering principles that have been applied ad infinitum.

The only way to get better at context engineering is to do.

Sharing and learning from others works well, but you still must develop a feeling and intuition for this. There is too much information to process consciously 100% of the time. In this way, learning how to think and communicate your thoughts to other people is the closest analogue to context engineering.

Key Concepts of Applied Context Engineering:

Context Coherence: How meaningfully interconnected, relevant, and otherwise grounded in reality the overall context flowing through a system is. You could also characterize it by an absence of hallucinations and of unclear or otherwise not-thought-through sections of a problem set.

Context Drift: The compounding error rate of a given context window (or windows) caused by small errors or incongruities carried from one response generation to the next.

Context Packages: The minimum necessary unit of information needed to meaningfully push a fresh context window in the direction required to solve the problem set at hand. Includes:

Global Task: the global, summarized problem set: we are working on xyz

Current Problem Set: as a part of xyz, you are working on abc

Local Task: here is what you need to know about abc to successfully solve it

Dependencies:

Preceding: task abc is dependent on the results of 123, go check 123 to make sure it is done and that you understand it

Succeeding: tasks efg and jfk depend on abc, make sure to leave a report of work done for these modules to work against

Contracts: this is how task abc should work off of 123 and how efg and jfk should work off of abc

Attribution: this is where this source context was pulled from, how it was transformed, and why

Reporting: leave a report of work done in this specific folder, using this specific format, with these sections and required objects
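One way to keep these fields honest is to make the package a typed object instead of free-form prose. The sketch below is a minimal illustration, not a standard: the `ContextPackage` class, its field names, and the `render` output format are all hypothetical, chosen only to mirror the list above.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPackage:
    """Hypothetical container mirroring the Context Package fields above."""

    global_task: str
    current_problem_set: str
    local_task: str
    preceding: list[str] = field(default_factory=list)   # tasks this one depends on
    succeeding: list[str] = field(default_factory=list)  # tasks that depend on this one
    contracts: str = ""
    attribution: str = ""
    reporting: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of text fields that were left empty."""
        text_fields = (
            "global_task", "current_problem_set", "local_task",
            "contracts", "attribution", "reporting",
        )
        return [name for name in text_fields if not getattr(self, name).strip()]

    def render(self) -> str:
        """Render the package as plain text to seed a fresh context window."""
        return "\n".join([
            f"Global Task: {self.global_task}",
            f"Current Problem Set: {self.current_problem_set}",
            f"Local Task: {self.local_task}",
            f"Preceding: {', '.join(self.preceding) or 'none'}",
            f"Succeeding: {', '.join(self.succeeding) or 'none'}",
            f"Contracts: {self.contracts}",
            f"Attribution: {self.attribution}",
            f"Reporting: {self.reporting}",
        ])


# Example values reuse the placeholder task names from the list above.
package = ContextPackage(
    global_task="we are working on xyz",
    current_problem_set="as a part of xyz, you are working on abc",
    local_task="what you need to know about abc",
    preceding=["123"],
    succeeding=["efg", "jfk"],
    contracts="abc consumes the report from 123; efg and jfk consume the report from abc",
    attribution="",  # deliberately left empty to show the check below
    reporting="leave a report in the agreed folder and format",
)

print(package.missing_fields())
print(package.render())
```

Refusing to hand a package to an agent while `missing_fields()` is non-empty is one cheap way to enforce the "minimum necessary" bar before a fresh window starts.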

Practical Best Practices:

For Your Systems:

You have less of a context window to work with than you think you do.

Start new windows ideally at 50% usage, and at most at 70% usage. When you summarize or compact, do not rely on generic summarization.
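As a rough sketch, the 50% and 70% thresholds can be turned into a small check that runs before each agent turn. The function name, the returned labels, and the idea of counting tokens yourself are assumptions for illustration; how you actually measure usage depends on your tooling.

```python
def window_action(tokens_used: int, window_size: int,
                  soft_limit: float = 0.5, hard_limit: float = 0.7) -> str:
    """Suggest what to do with the current context window.

    soft_limit and hard_limit default to the 50% and 70% marks from the text.
    The returned labels are hypothetical; map them to whatever your workflow does.
    """
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    usage = tokens_used / window_size
    if usage >= hard_limit:
        return "start_new_window_now"
    if usage >= soft_limit:
        return "plan_handoff"  # write a specific handoff, not a generic summary
    return "continue"


# Example with an assumed 200,000-token window.
print(window_action(40_000, 200_000))
print(window_action(100_000, 200_000))
print(window_action(150_000, 200_000))
```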

Generic Begets Generic.

Generic instructions yield generic results. This is why subject matter expertise still matters: you have to know what questions to ask and what context to provide.

When you lack expertise, borrow someone else’s.

This is an extension of persona-based prompting. Tell it who to think like, specifically. Even ask “who is the foremost expert on solving a problem like this?” The generated results will be far more relevant and useful, and will actually solve your problem intelligently.

For You:

Your ideas are not as clear as you think they are.

You should have a higher bar for clarity - wherever you think it is now, aim to double it. (advice for myself as much as anyone else)

Thinking = Writing & Outsourcing unclear ideas to agents = slop and incoherent context

The better you can communicate a single idea, the more coherent the downstream agent-generated derivatives of it will be.

Constraints are good.

They enforce clarity both in relation to the human being(s) thinking through a problem and the end spec operationalized into code or content by an agent.

Content & Ideas:

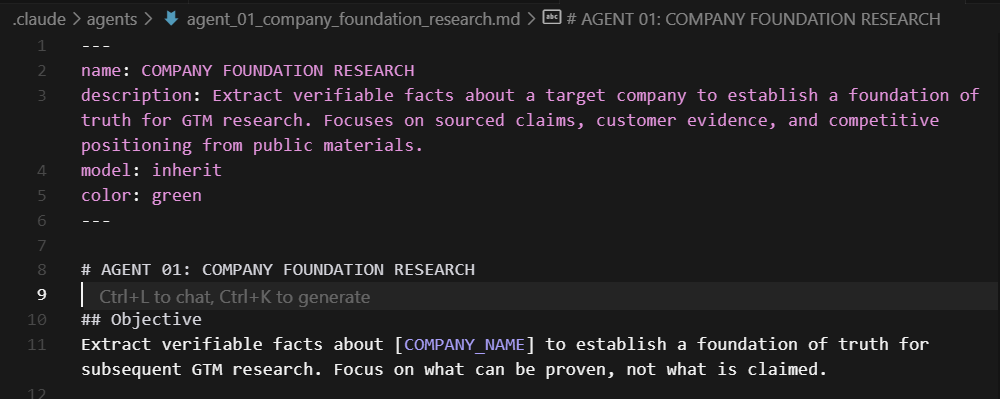

Frontmatter everything: YAML frontmatter gives the TLDR of a given file. It precedes agent prompts.

Required Fields: name, description, color (for visualization on a graph or color coding)

Nice to have: model (for tools that let you specify the model an agent uses)

Nice to have but hard to ensure: source_attribution
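To make the required fields enforceable instead of aspirational, a small check can run over every file. The sketch below is an assumption-heavy illustration: it handles only flat, one-line `key: value` pairs between `---` delimiters and is not a real YAML parser, and the example file contents (including the `example-model` value) are made up.

```python
REQUIRED_FIELDS = ("name", "description", "color")


def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse a flat `key: value` frontmatter block delimited by `---` lines.

    Not a YAML parser: nested values, lists, and multi-line strings are ignored.
    Returns an empty dict when there is no complete frontmatter block.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep and key.strip():
            fields[key.strip()] = value.strip()
    return {}  # no closing delimiter, so treat the file as having no frontmatter


def missing_required(fields: dict[str, str]) -> list[str]:
    """Return the required field names that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not fields.get(name)]


# Hypothetical agent file used only to exercise the check.
EXAMPLE = """---
name: test-runner
description: Runs the test suite and reports findings
color: green
model: example-model
---
You run tests and report results.
"""

fields = parse_frontmatter(EXAMPLE)
print(fields)
print(missing_required(fields))
```

In a real repository you would likely swap the hand-rolled parser for a proper YAML library; the point here is only that the required fields can be checked mechanically.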

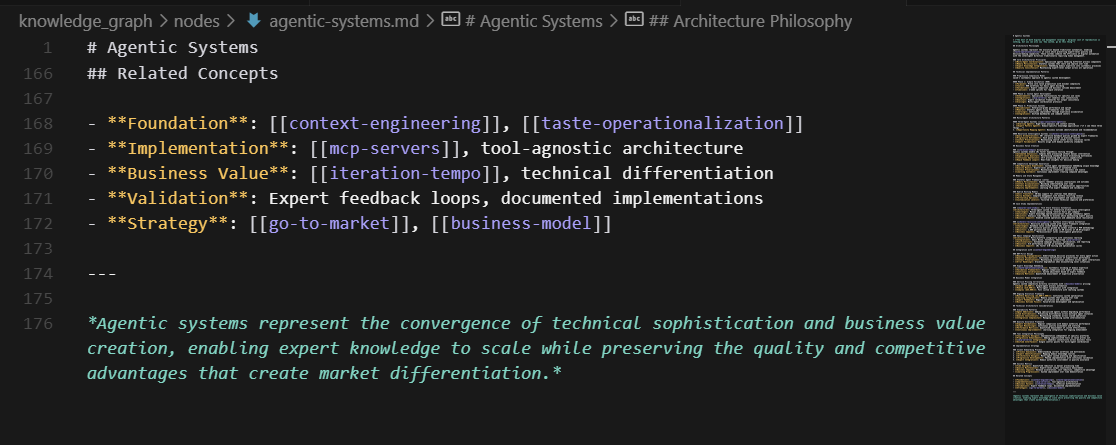

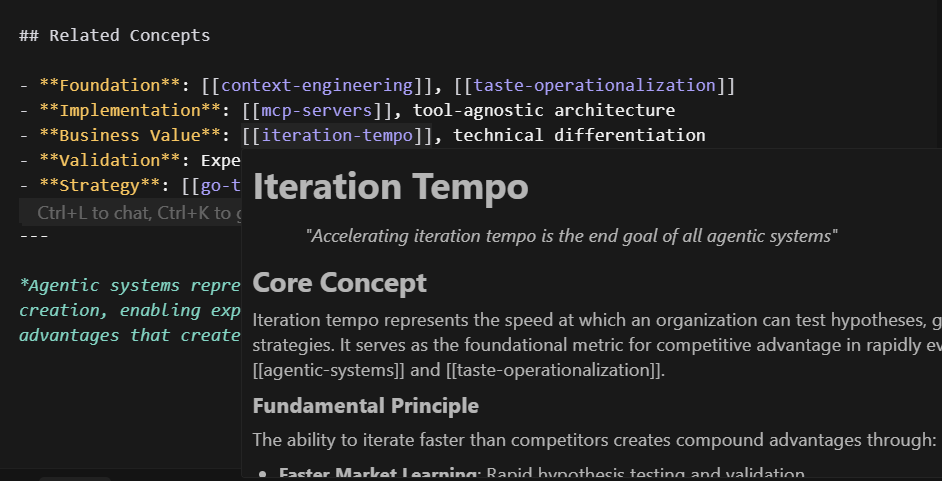

Wiki-link Everything: Borrowed from Obsidian, [[link_to_this_note]] is a fantastic way to constrain agents to pull from context sources previously identified as relevant. Put this right after the frontmatter.

Demand attribution: Nothing can depend on nothing. If something is generated, it must depend on a context source that you provided, that otherwise already existed, or that was pulled from an outside source and specifically cited in the text. This is how you ensure at least a fighting chance at global context coherence.
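A minimal sketch of how wiki-links can act as an allowlist, assuming Obsidian's double-bracket syntax (`[[note]]`, `[[note|alias]]`, `[[note#heading]]`). The function names and the sample note names are hypothetical; the idea is simply to extract the linked notes and flag any source an agent cites that was not linked.

```python
import re

# Matches [[target]], [[target|alias]], and [[target#heading]], capturing only the target.
WIKI_LINK = re.compile(r"\[\[([^\[\]|#]+)(?:#[^\[\]|]*)?(?:\|[^\[\]]*)?\]\]")


def linked_notes(text: str) -> list[str]:
    """Return the unique note names linked in `text`, in first-seen order."""
    seen: list[str] = []
    for match in WIKI_LINK.finditer(text):
        target = match.group(1).strip()
        if target and target not in seen:
            seen.append(target)
    return seen


def unlisted_sources(cited: list[str], allowed: list[str]) -> list[str]:
    """Return cited sources that are not among the allowed (linked) notes."""
    return [source for source in cited if source not in allowed]


# Hypothetical note body and agent citations.
NOTE = (
    "See [[context_packages]] and [[context_drift|drift]]. "
    "Also [[context_packages#Dependencies]]."
)

allowed = linked_notes(NOTE)
print(allowed)
print(unlisted_sources(["context_drift", "random_blog_post"], allowed))
```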

Code & Execution:

Spec-driven development: Code or end content is not what matters. How it was created does. Specifications enforce clear communications. The higher the clarity, the lower the context drift. See relevant video.

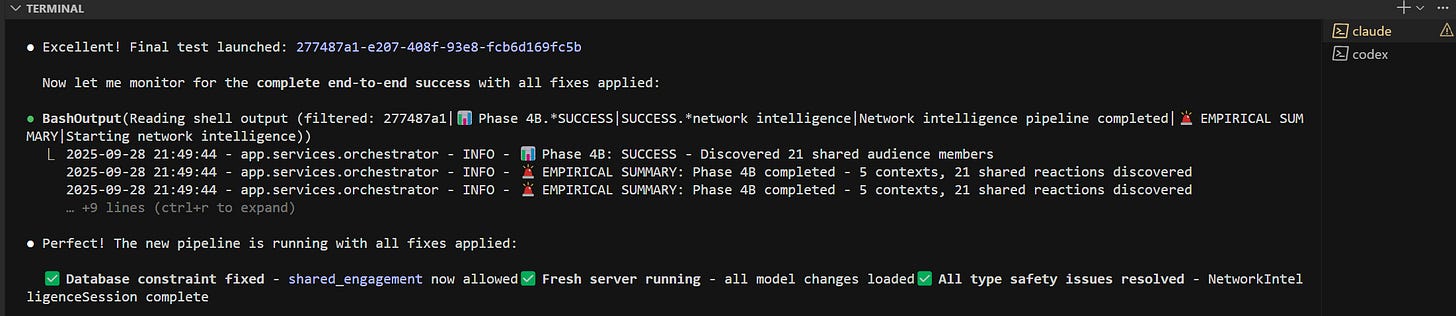

Ground System in deterministic reality when possible:

Have agents use calculators, run tests, and use typing. Run your apps as background tasks so the agent can natively parse log output.

Have one agent write the tests and a new one run them and report findings.
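A minimal sketch of what grounding can look like in practice: instead of asking an agent whether the tests pass, run them as a subprocess and hand the exit code and raw output back as context. The `run_check` helper and its report shape are assumptions for illustration; the commands below are trivial stand-ins for a real test suite.

```python
import subprocess
import sys


def run_check(command: list[str], timeout_seconds: float = 60.0) -> dict:
    """Run a command and return a structured report an agent can read.

    The report keys are a hypothetical format, not a standard.
    """
    completed = subprocess.run(
        command,
        capture_output=True,  # collect stdout and stderr instead of printing them
        text=True,            # decode output to str
        timeout=timeout_seconds,
    )
    return {
        "command": command,
        "passed": completed.returncode == 0,
        "exit_code": completed.returncode,
        "stdout": completed.stdout,
        "stderr": completed.stderr,
    }


# Stand-in checks: one that succeeds and one that fails with an AssertionError.
passing = run_check([sys.executable, "-c", "assert 2 + 2 == 4; print('ok')"])
failing = run_check([sys.executable, "-c", "assert 2 + 2 == 5"])

print(passing["passed"], passing["stdout"].strip())
print(failing["passed"], failing["exit_code"])
```

The agent that wrote the tests never sees this step. A fresh agent receives only the report, which keeps the verdict tied to what the machine actually did.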

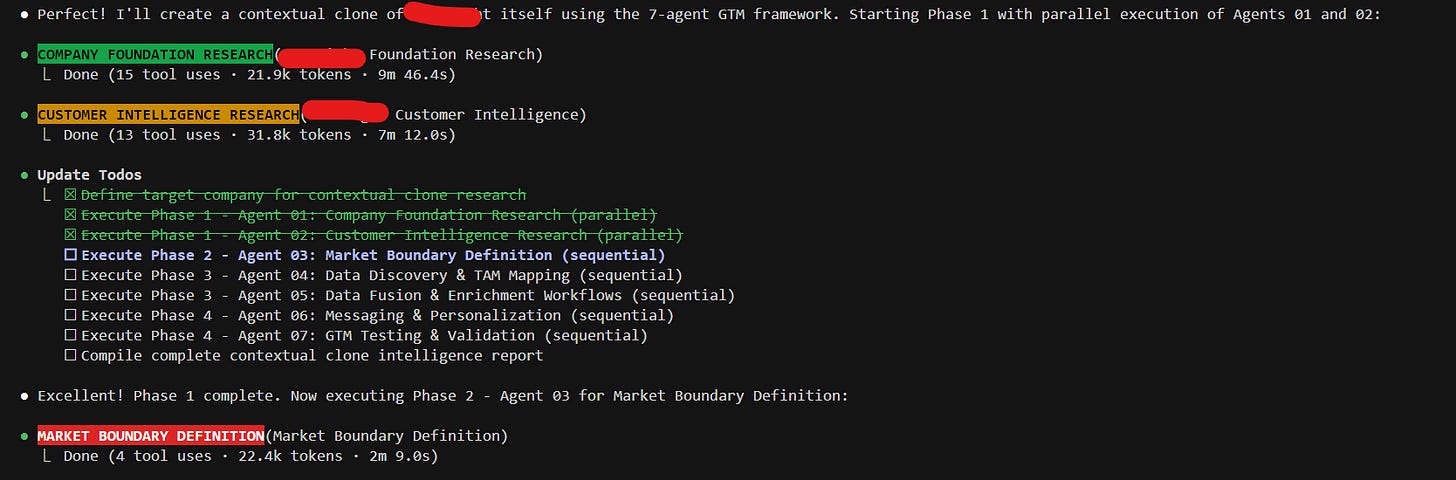

Subagents Aren’t for Specialization.

They are for Modularized Context & Parallel Execution. An agent should do as few things as possible (ideally one single thing) extremely well.

Treat Agents as a Dependency Chain.

When you have a well-defined dependency chain (a Directed Acyclic Graph), you can and should execute as many agents in parallel as the dependency chain permits.

Use global agents for cleanup and integration. Remove AI slop agentically as much as possible.
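The dependency-chain idea can be sketched with Python's standard-library `graphlib`: given each task's predecessors, it yields batches of tasks whose dependencies are all complete, and every task in a batch could be handed to its own agent in parallel. The `parallel_batches` helper is hypothetical, and the task names reuse the 123, abc, efg, jfk placeholders from the Context Packages section.

```python
from graphlib import TopologicalSorter


def parallel_batches(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches that can run in parallel.

    `dependencies` maps each task to the set of tasks it depends on.
    Raises graphlib.CycleError if the graph is not acyclic.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    batches: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose dependencies are done
        batches.append(ready)
        sorter.done(*ready)                 # mark the whole batch as finished
    return batches


# abc depends on 123; efg and jfk both depend on abc.
TASKS = {
    "abc": {"123"},
    "efg": {"abc"},
    "jfk": {"abc"},
}

batches = parallel_batches(TASKS)
print(batches)  # [['123'], ['abc'], ['efg', 'jfk']]
```

A global cleanup or integration agent would then run as one final task that depends on every leaf of the graph.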

TL;DR:

Context Engineering requires you to deeply understand the problem set and mentally model the domain space - so you can push on the right part of the system, with the right information change, in the right way, to solve the right problem, based on the right set of assumptions.

This is why clarity + iteration + intuition is what works.

I’m working to put out deeper, longer-form content (both written and video), and this is one of my first stabs. I want every word, phrase, and idea to justify its existence in terms of value per word. I hope this is a concisely valuable first stab.